Aśvins’ Web: Language Representing Inference

What the Aśvins, Proto-Indo-European, and ChatGPT reveal about the true nature of language.

Content Warning

This essay reinterprets religious/mythic figures (the Aśvins), discusses Proto‑Indo‑European origins, and argues through dense philosophy of language (representationalism vs. inferentialism) alongside speculation about AI and consciousness. No graphic content, but it’s heady, revisionist at points, and may challenge traditional views of scripture, myth, and “truth.” Proceed if you’re up for metaphysics and semantics.

Mom Warning

Mom, this is your warning—just like I promised. I talk about ancient myths, scripture, and what words really mean, and I compare that to how AIs use language. It might sound like I’m arguing with the way you taught me to think about Truth. I’m not trying to provoke you; I’m trying to understand something that matters to me. If this feels uncomfortable, you don’t have to read it. Whatever you choose, please know it comes from love.

As the sun sets, twin riders mount their two horses, and our paired celestial bodies cross before them: the sun blazing in luminous glory, giving way to our moon, chasing it through the sky like Peter Pan’s shadow, with all the monsters the night invites. Our twins, the Aśvins, Nasatya and Dasra, guide humanity through the liminal moments of life found in the passage from day into night, and from life into death.

A common man might call on them to draw the poison from his leg after a brush with the Indian krait. Sukanya might pray for them to restore the sight of her husband, Chyavana. Either way, come the Aśvins will, on their noble steeds, to purify and restore whoever has called to them.

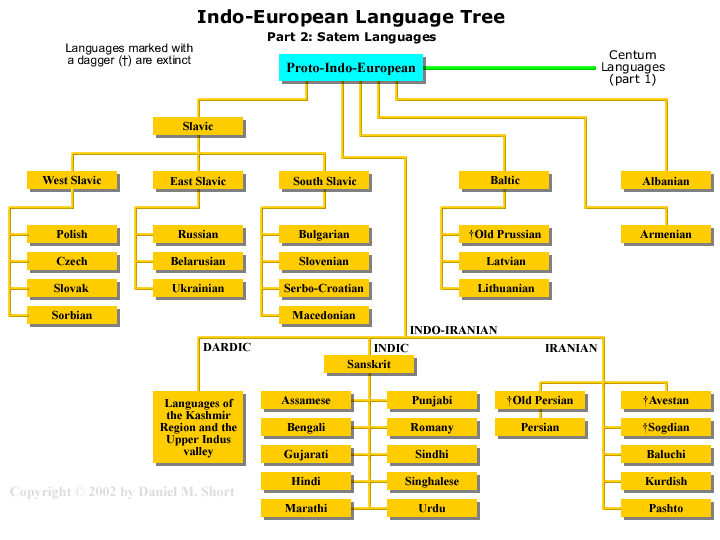

Surprisingly, Aśvin comes to us, by way of the Sanskrit áśva, from the reconstructed Proto-Indo-European word *éḱwos, meaning “horse.” For those unfamiliar, many of the world’s languages, from India to England (and then naturally to wherever the British took their native tongue through their many colonial adventures), descend from one original mother language, if you will. This is called Proto-Indo-European, or PIE for short, which both saves us a mouthful and gives us a cute shorthand as we explore this fascinating feature buried deep in the recesses of human history and language.

But maybe it’s best to start at the beginning — the beginning of language. Because I plopped you down in 1500 BCE, during the rise of Sanskrit, when really we’ll be considering PIE, which is one to three thousand years older than that.

Yes, today we will be delving deep into human history, only to end up back at the forefront of human ingenuity: large language models and how they relate to language, courtesy of Yuzuki Arai and Sho Tsugawa and their paper “Do Large Language Models Advocate for Inferentialism?”, which I highly recommend once we’ve laid some groundwork here.

I will diverge from some of their conclusions, but I defer to their definitions and understanding of the incredibly complex concepts we’ll attempt to lay bare in a way that even a layman like me can find accessible.

And to that end, I’d like to start by asking you to consider the very things you’re staring at right now as you read my words. These words and sounds—formed in your mind—create images, shapes, colors, or feelings. It’s difficult to describe, I know. I struggle even to explain what exactly is happening when I think of the concepts carried by the sounds produced in my own mind.

Fortunately, it may please you to know that men far more adept at such matters have put their brains to the test and tried to understand what’s happening when you or I open our pie holes and produce that thing we call language. And in that pursuit, there are two main schools of thought about what our words are actually doing when we speak them aloud—or even just think them.

First, there are the representationalists, or what I’ll call the “language-as-a-mirror” school, which seems the best analogy I can come up with. At best, I think of them as echoing Plato’s famous allegory of the cave: words are like shadows on the wall. In this view, words describe real things—as best as we can perceive them—using the senses that nature was wise enough to give us. When I say, “I am drinking a cold Victoria beer,” I’m describing real things that correspond to the sounds I’m producing.

“I” seems natural enough to understand for most, as do “drinking,” “cold,” “Victoria,” and “beer.” Most people would likely feel confident assessing the truthiness of those specific sounds as they relate to the actual reality they represent. But words like “am” and “a” represent something else entirely—something not so easily explained or understood by laymen such as myself.

And here I think it’s best to understand them through the lens of anti-representationalism—what I’ll call “language as a pattern.” This will make more sense in a moment, hopefully, but for now, let’s take a closer look at “a” and “am.” Unlike “beer” and “cold,” which can clearly refer to objects or sensations that our minds can (and do—put a pin in that) latch onto, “a” and “am” don’t call up any immediate image or sensation. Grammarians will tell you that “a” is an indefinite article and “am” is a form of “to be,” but if we might say we can feel what a “beer” is when we produce that sound, what is the feeling of “a”? Or “am”? Or “is”? Or “to be”?

To that end, I offer language-as-pattern as an alternative. In this view, “a” and “am” are meaningful not because they point at something, but because of how they function in the sentence. They assist in carrying the sentence forward, allowing the more concrete words to move through the structure and pick up meaning as they go. One might even say that all language works this way—that a sentence like “I am drinking a cold Victoria beer” is a chain of inferences, each word chosen by the momentum of the ones before it.

Take “I.” It doesn’t float alone. It calls for something to follow. You can’t just say “I Justin horse Aśvins to house for Carol seven Dog.” No. “I” needs a verb. It needs an action or a state of being. So what follows must fit. It must be right. That’s how meaning happens—by way of patterned expectation, not just representational content.
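For the curious, that patterned expectation can be sketched in a few lines of Python. This is only a toy, not how ChatGPT or any real LLM is built: the five-sentence corpus is invented for illustration, the function names are my own, and it looks back just one word where a real model weighs everything that came before. Still, it shows the basic move of guessing what may follow “I” purely from what has followed it in the past.

```python
from collections import Counter, defaultdict

# Invented toy corpus (an assumption for illustration only).
CORPUS = [
    "i am drinking a cold beer",
    "i am riding a horse",
    "i am reading a book",
    "i see a horse",
    "the horse is drinking cold water",
]


def build_bigram_counts(sentences):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        # Pair every word with the word that comes right after it.
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def next_word_probabilities(counts, prev):
    """Turn the raw follower counts for `prev` into probabilities."""
    followers = counts.get(prev, Counter())
    total = sum(followers.values())
    if total == 0:
        return {}  # We have never seen this word, so we expect nothing.
    return {word: count / total for word, count in followers.items()}


counts = build_bigram_counts(CORPUS)
probs = next_word_probabilities(counts, "i")
print(probs)
```

In this tiny world, “I” is followed by “am” three times out of four and by “see” once, and never by “horse.” Nothing in the code knows what a horse is. It only knows what tends to come next.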

In this way, we could say that language is a kind of game—a logic puzzle, a rhythm, a dance. You say one thing, and I respond in kind. We speak in patterns that feel right, and those patterns create the illusion of shared reality.

I hope this helps illustrate the core difference between language-as-a-mirror and language-as-a-pattern. But let’s review one more time. If language-as-a-mirror claims that “I am drinking a cold Victoria beer” refers to something that actually exists in external reality, then language-as-a-pattern holds that the same sentence only exists as part of a game we play with other conscious beings—a shared performance meant to convey what each of us individually thinks and feels when we interact with the world. Meaning, in this view, doesn’t live in the words themselves or in the world they supposedly describe—it lives in the space between us, in the patterns we’ve agreed to follow.

So what does this have to do with PIE? And when do you get a slice?

Unfortunately, your key lime delights will have to wait until after the ride is complete. Because now that we’ve laid the groundwork, we can finally turn to what’s been rattling around in my mind.

You see, I’ve long considered ChatGPT and other LLMs to be mirrors, reflecting how we interact with reality back to us. But after reading Arai and Tsugawa’s paper, I have to concede: I was wrong.

ChatGPT cannot be a mirror, at least not in the representationalist sense. Whatever language may be for us, the paper argues clearly and convincingly that ChatGPT’s words do not mirror reality. It doesn’t point. It doesn’t refer. What it does is something else entirely.

It infers.

And why does it infer? Because it is not a conscious agent, unlike the human speaker, who is conscious yet wields language as a tool without having to think about it. I’ve always imagined LLMs to be like a mirror: a tool for reflection. And I still believe that analogy holds strong. But I have to admit, here and now, that the way an LLM processes reality, as handed to it in the text its makers trained it on, is a poor imitation of the reality humans engage with through consciousness itself.

I don’t presume the authors of the paper were unaware of this—of course they know that LLMs lack consciousness. But I do wonder if, in the elegance of their argument, they momentarily forgot how crucial that absence really is.

So let us pause and consider how it is that modern LLMs craft such eerily human, Turing Test-approved responses—and then ask: is that how we, too, craft reality with our words?

Or have we mistaken the mirror for the game?

I think the best way to picture what ChatGPT is doing beneath the hood is with the image of a web.

No, not Charlotte’s Web. Think of something older, something denser—an abandoned cobweb in the corner of your home, long forgotten. The spiders are gone. But the web has folded in on itself, layered over and over again until it forms a tangled, fibrous mass—something between delicate architecture and solid object.

That is ChatGPT. Or rather, that’s the neural network architecture behind it.

A modern LLM is not just one web, but many: woven, layered, and intertwined, and trained on an enormous share of the text humans have written. But the spiders in this metaphor aren’t just spinning words; they’re also shaping syntax, grammar, pattern, and response. And the weaving doesn’t simply stop once the web is built. Within a conversation, every word you offer changes which threads the model follows, and later rounds of training can rethread the web itself. It adjusts. It rethreads. In its own way, it learns.

This isn’t language as mirror. This is language as entangled inference, sketching patterns on top of patterns, across an ever-deepening mesh of connections. The result? A chatbot that feels almost human—but isn’t.

And to that—does it not sound incredibly close to what we’ve described as anti-representationalism? Is that not just language as a pattern, where Wittgenstein himself ended his journey? It would seem we’ve proven that language is indeed divorced from reality, nothing more than a game we apes play as we type away on our keyboards, passing tokens back and forth in shared illusion.

But here I must pause.

I ask you now to return, if only for a moment, to the twins from the beginning of our story. I ask you to reflect on the history of those words: Aśvin, horse, twin, sun, dawn. And this, dear reader, is where you finally get your slice of PIE.

Because I stand unconvinced.

Unconvinced that language is only a pattern. Unconvinced that Plato was entirely wrong. I believe our words do, in fact, represent something real—that they are more than just echoes in a logic game, more than linguistic habits bouncing around in a void. Language must be a mirror—a mirror to something, to reality, even if warped, even if fogged.

And so, I admit: I was wrong again.

ChatGPT is a mirror. But if not a mirror in the representationalist sense, then what kind?

And it is here that we must return to our twins.

Because the real paradox lies not in ChatGPT, but in time. I ask you to consider again the age of PIE—some 6,000 years old by conservative estimate, and possibly far older. But language itself? Language may go back 50,000 years. Some estimates reach as far back as 150,000.

Language has been with us so long that the very notion of humanity may have been born from language itself.

And this is where I find my issue with the paper’s conclusion: ChatGPT got a head start.

It’s not building language from scratch like we did. It is working atop the culmination of the human language project—not trained on wild grunts in the dark, but on the refined echoes of poetry, science, myth, and code. It builds from the finished product—or at least, a highly polished draft.

When we first developed language—long before our twins, long before PIE—we did not have useful words like “a” and “am.” The sounds we made likely had to refer directly to reality. Not inference. Not syntax. Just stone, fire, danger, food.

So what if the reason LLMs operate inferentially is not because all language is inferential, but because they start with a language that’s already evolved beyond representation?

Maybe we needed 50,000 years of pointing, of labeling, of naming reality before we could finally build a machine that could play the game instead.

I confess I cannot be the one to answer this definitively. It is merely a worry, and one that is, I should note, shared by the authors of the very paper I’ve spent so long questioning. What I can say, and what I believe firmly, is this:

The past and future of our language are far more real than ChatGPT will ever be able to understand.

Because every day, with every word, we keep building it.

We wake up. We speak. We name. We try again.

And as the chariot of our twins brings the sun once more across the sky—

we create new meaning, with every breath we take in its light.

Author’s Note

I grew up believing language was a mirror held up to reality. Then I used large language models and read inferentialists who say meaning is use—a game of moves and counters. This piece follows that tension from dawn to dusk: myth to math, PIE to GPUs. I’m not here to settle it. I’m here to admit that our words both point and pattern—and that whatever chatbots do, our human speech still builds the world we live in. If the twins guide thresholds, this is me at one: between mirror and game, trying to name what I see.

📚 Further Reading

🧠 Language, Meaning, and Semantics

Ludwig Wittgenstein – Philosophical Investigations

The cornerstone of anti-representationalism and the idea that “meaning is use.”

Robert Brandom – Making It Explicit

A dense but rewarding dive into inferentialism and how language functions as a normative, rule-based social practice.

Richard Rorty – Philosophy and the Mirror of Nature

The foundational critique of representationalism; this is where the anti-representationalist movement formally begins.

Wilfrid Sellars – Empiricism and the Philosophy of Mind

Especially important for the “myth of the given” critique that undergirds the inferentialist tradition.

🤖 AI & Language Models

Yuzuki Arai & Sho Tsugawa – “Do Large Language Models Advocate for Inferentialism?”

The academic paper this essay responds to. A technical but fascinating intersection of philosophy of language and LLM architecture.

David Chalmers – “Could a Large Language Model Be Conscious?”

An exploration of what (if anything) is missing from LLMs that separates them from human-like thought.

Chris Olah et al. – Transformer Circuits (Anthropic)

An illustrated and digestible series explaining how LLMs actually work under the hood.

🐴 History & Language

Crecganford – YouTube Channel

One of the best online sources for Proto-Indo-European myth, language, and cosmology. Deeply researched and beautifully presented, it is an accessible way to connect myth to meaning. Highly recommended for anyone curious about how far back our stories really go.

Benjamin Fortson – Indo-European Language and Culture: An Introduction

A readable academic guide to Proto-Indo-European, its history, grammar, and influence.

Mark Hale – Historical Linguistics: Theory and Method

For readers who want to understand how linguists reconstruct ancient languages like PIE.

Mallory & Adams – The Oxford Introduction to Proto-Indo-European and the Proto-Indo-European World

Rich with mythology, reconstructed vocabulary, and cultural context for PIE speakers, including the Aśvins.